Key Takeaway

Nvidia (NVDA) enters its most important week of 2026 with the GTC developer conference set to begin March 16, where CEO Jensen Huang has teased a "world-surprising" chip announcement that could mark the definitive shift from generative chatbots to fully autonomous "agentic" AI systems. The stock has pulled back approximately 11% from its October 2025 all-time high of $207, creating what analysts view as an attractive entry point ahead of potentially transformative product unveilings.

Wall Street remains overwhelmingly bullish despite recent volatility, with 38 analysts maintaining Strong Buy ratings and consensus price targets ranging from $263 to $274—representing 42-48% upside from current levels around $185. The upcoming Vera Rubin architecture, Blackwell ramp acceleration, and Nvidia's expanding software platform strategy position the company to capture an outsized share of the projected $650 billion Big Tech AI infrastructure spending wave in 2026.

For investors seeking exposure to the AI revolution's infrastructure layer, Nvidia continues to offer the purest play with the strongest competitive moat, though geopolitical risks around China export restrictions and margin pressure during product transitions warrant careful monitoring.

The GTC 2026 Catalyst: What Jensen Huang's "World-Surprising" Chip Means for Investors

Nvidia's GPU Technology Conference (GTC) has earned its reputation as the "AI Super Bowl," and this year's event carries unusually high stakes for NVDA shareholders. In a rare pre-conference blog post, Jensen Huang positioned artificial intelligence as "the largest infrastructure build-out in human history"—a bold framing that sets expectations for major announcements extending beyond incremental hardware updates.

The centerpiece speculation revolves around a "world-surprising" chip that analysts believe could represent Nvidia's answer to the growing demand for agentic AI systems. Unlike current generative models that respond to prompts, agentic AI operates autonomously, executing complex multi-step tasks with minimal human intervention. This evolution requires fundamentally different computing architectures optimized for inference rather than training, potentially opening entirely new addressable markets for Nvidia.

Several technical possibilities have emerged from supply chain and industry sources. The most intriguing rumor suggests Nvidia may unveil a dedicated optical-compute chip or Co-Packaged Optics (CPO) switch technology that replaces traditional copper wiring with light-based data transmission within server racks. Such innovation would address one of the most pressing constraints in modern AI infrastructure: energy consumption and heat generation at scale. By reducing the power spent moving data while dramatically increasing bandwidth, optical interconnects could help enable the gigawatt-scale AI factories that Huang envisions.

Additionally, the Vera Rubin architecture, named after the pioneering astronomer whose galaxy rotation measurements provided key evidence for dark matter, has reportedly entered full-scale mass production. Industry analysts expect Huang to claim a 10x reduction in inference token costs with Rubin, a breakthrough that would broaden access to advanced AI by making production deployment significantly more economical. If delivered, this cost reduction could accelerate enterprise AI adoption far beyond current projections.

The timing matters enormously for investors. GTC's keynote on March 17 coincides with the Federal Reserve's March meeting, creating a potential volatility cocktail. However, UBS analyst Timothy Arcuri maintains that Nvidia has "upside bias" heading into the event, having recently reiterated his Buy rating and $245 price target following discussions with CFO Colette Kress.

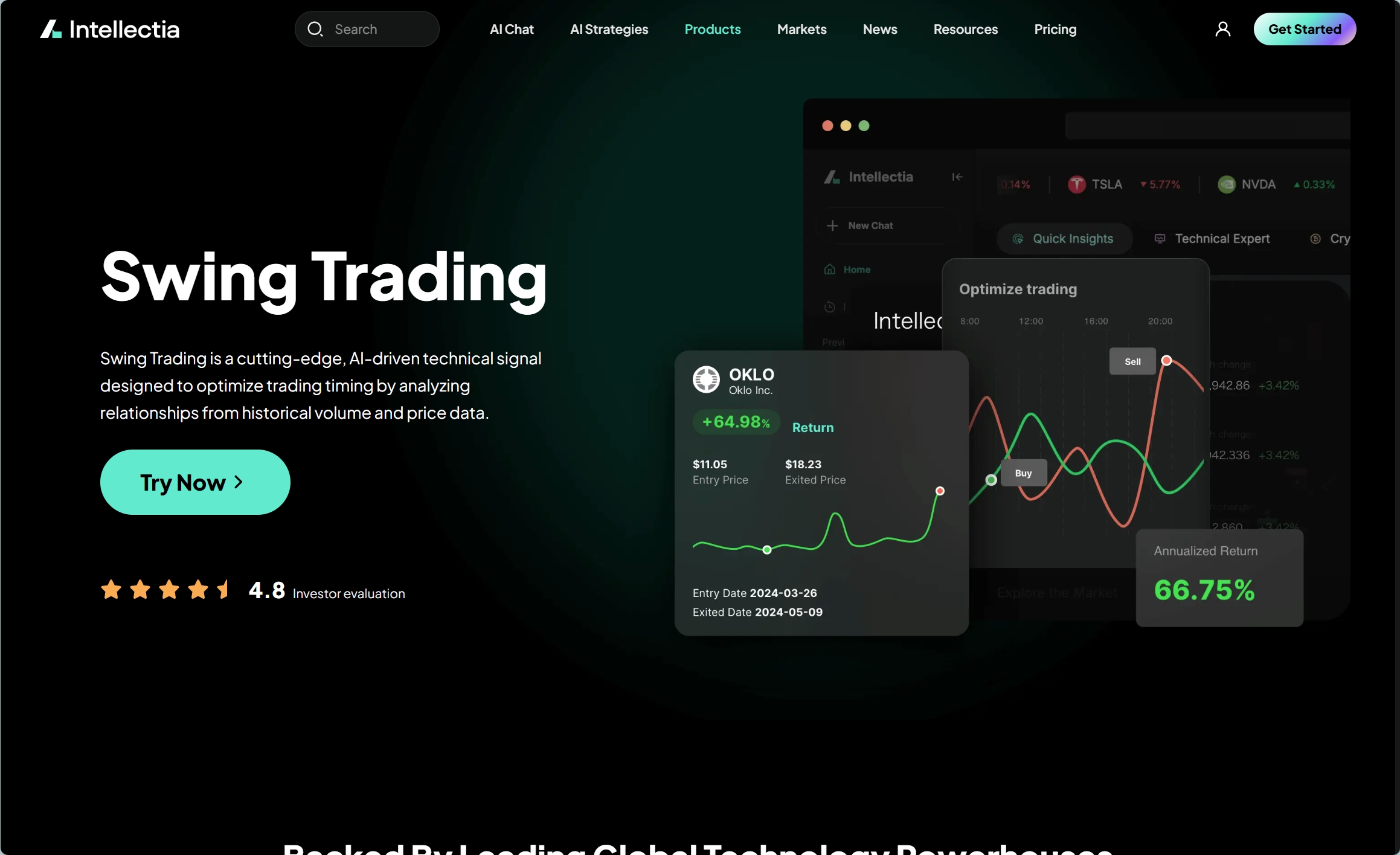

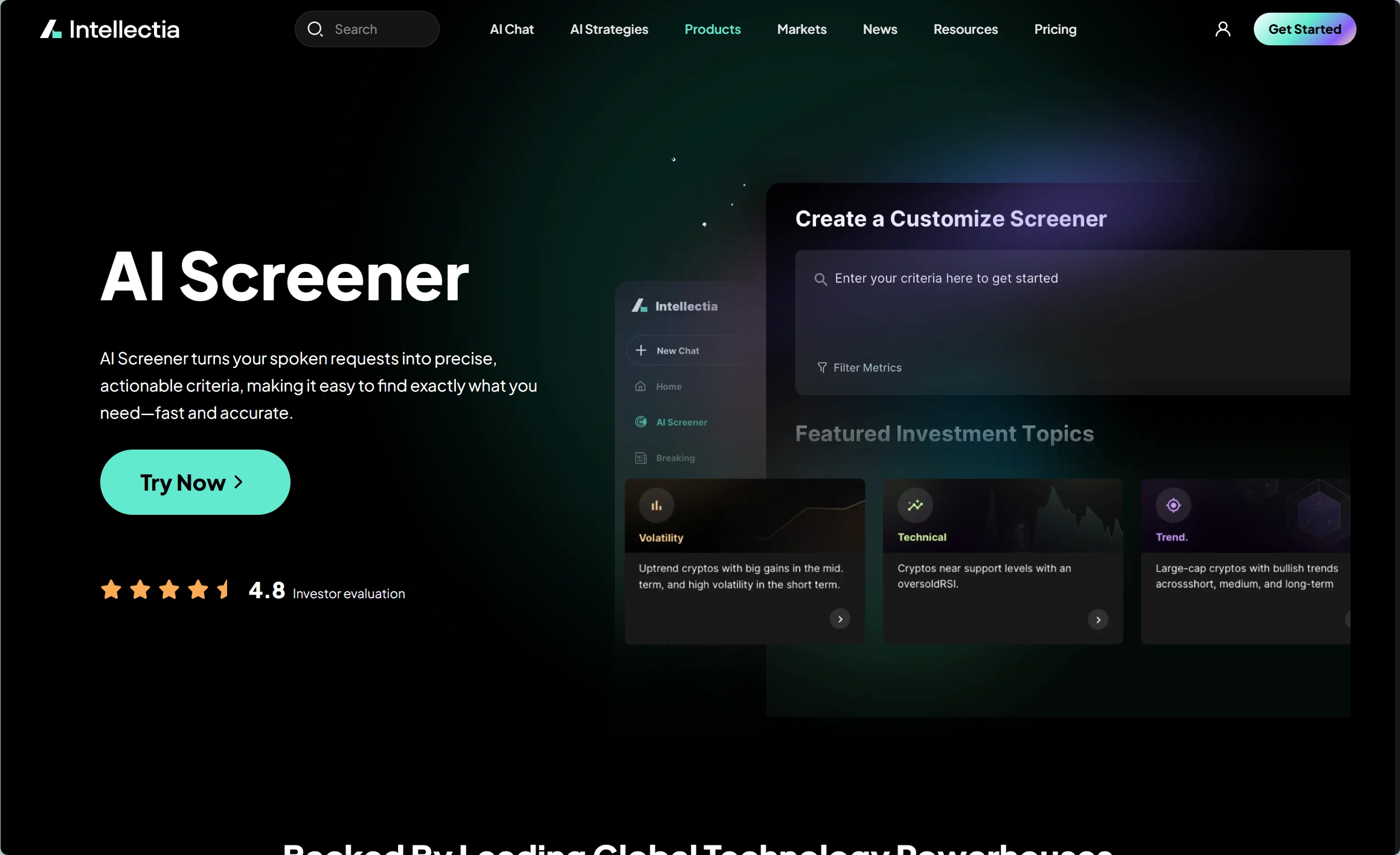

Current Stock Analysis: Reading the Technical Landscape

Nvidia's stock performance over the past six months illustrates the complex sentiment surrounding AI infrastructure plays. After reaching an all-time closing high of $207.03 in October 2025, NVDA has experienced a measured pullback to approximately $184 as of mid-March 2026—a decline driven by multiple converging factors rather than fundamental deterioration.
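The size of the pullback can be checked from the two prices cited above. The short Python sketch below is illustrative only; the function names are hypothetical, and the inputs are the article's approximate figures rather than live market data. It also shows a point that is easy to overlook: the gain needed to revisit a peak is larger than the percentage decline from it.

```python
def drawdown_pct(peak: float, current: float) -> float:
    """Percentage decline from the peak price to the current price."""
    return (peak - current) / peak * 100.0


def recovery_gain_pct(peak: float, current: float) -> float:
    """Percentage gain needed from the current price to revisit the peak."""
    return (peak - current) / current * 100.0


# Figures cited in the article (approximate, not live quotes).
peak_close = 207.03    # October 2025 all-time closing high
current_price = 184.0  # approximate mid-March 2026 level

print(f"Drawdown from peak: {drawdown_pct(peak_close, current_price):.1f}%")
print(f"Gain needed to revisit peak: {recovery_gain_pct(peak_close, current_price):.1f}%")
```

With these inputs the drawdown works out to about 11.1%, while a return to the closing high would require a gain of about 12.5% from current levels.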

The primary headwind has been escalating export restrictions on China, which remains a significant market for Nvidia's data center products despite previous limitation rounds. Recent reports indicated that Nvidia suspended production of its H20 chips—specifically designed to comply with earlier U.S. regulations—following security concerns raised by Chinese authorities. While CEO Jensen Huang denied allegations that these chips contained security backdoors, emphasizing their commercial-only design, the episode highlights the geopolitical tightrope Nvidia must walk.

Margin pressure during the Blackwell architecture ramp has also weighed on near-term sentiment. New product transitions typically depress gross margins temporarily as manufacturing yields optimize and older generation inventory clears. However, historical patterns suggest these pressures resolve within two to three quarters, after which Blackwell's superior economics should drive margin expansion.

Institutional positioning presents a mixed but ultimately constructive picture. While some managers like Montrusco Bolton Investments reduced stakes by 11.8% in recent quarters, others including Mitsubishi UFJ Asset Management increased positions. Primecap Management maintains NVDA as its seventh-largest holding despite some portfolio rebalancing. This churn reflects normal profit-taking after the stock's 1,160% gain since January 2023 rather than conviction changes.

From a technical perspective, NVDA has established support in the $175-180 range, with resistance near $195-200. A convincing GTC catalyst could break this range to the upside, potentially triggering a momentum surge as algorithmic trading systems and retail investors pile into the move. Conversely, disappointment around the "world-surprising" announcement could test lower support levels near $170.
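For readers who track these levels programmatically, the range described above can be expressed as a simple classifier. This is a minimal sketch, assuming the support and resistance bands quoted in this section; the function name and zone labels are hypothetical and not part of any charting library.

```python
def classify_price(
    price: float,
    support: tuple = (175.0, 180.0),
    resistance: tuple = (195.0, 200.0),
) -> str:
    """Place a price relative to the support and resistance bands.

    The default bands are the approximate levels cited in the article.
    """
    if price > resistance[1]:
        return "breakout above resistance"
    if price >= resistance[0]:
        return "testing resistance"
    if price < support[0]:
        return "breakdown below support"
    if price <= support[1]:
        return "testing support"
    return "inside range"


# Example prices: the current level, a breakout, and the lower support test.
for p in (184.0, 205.0, 170.0):
    print(p, "->", classify_price(p))
```

A price near $184 falls inside the range, while the $170 level mentioned as lower support would register as a breakdown below the $175-180 band.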

Understanding the Blackwell and Vera Rubin Architecture Transition

Nvidia's product roadmap has become a critical determinant of its stock performance, with each architecture generation driving multi-quarter revenue cycles. The current Blackwell ramp and upcoming Vera Rubin launch represent the most significant technology transitions in the company's history, with implications extending far beyond traditional GPU upgrades.

Blackwell, unveiled in 2024, introduced several innovations specifically designed for AI workloads at scale. The architecture features second-generation transformer engine technology, fifth-generation NVLink for improved multi-GPU communication, and advanced reliability features targeting enterprise deployment. However, the transition has not been without challenges—early production faced yield issues common to leading-edge semiconductor manufacturing, temporarily constraining supply.

More significantly, Blackwell established Nvidia's strategy of selling complete AI infrastructure systems rather than standalone chips. The GB200 NVL72 configuration, which combines 72 Blackwell GPUs with NVLink fabric in a single rack-scale system, commands premium pricing and dramatically expands Nvidia's addressable revenue per deployment. This systems-level approach also deepens customer lock-in, as migrating to alternative platforms requires replacing entire infrastructure footprints rather than simply swapping cards.

The Vera Rubin architecture, expected to take center stage at GTC 2026, promises another leap in capability. Beyond raw performance improvements, Rubin appears optimized specifically for inference workloads—the operational phase where trained AI models generate outputs. This focus matters because inference computing requirements grow proportionally with AI adoption, whereas training represents a more discrete market tied to model development cycles.

Industry sources suggest Rubin will deliver substantial efficiency gains, with projections of 10x lower token costs compared to current-generation hardware. If realized, this improvement would transform the economics of running large language models and other AI systems, potentially triggering an enterprise adoption wave similar to what cloud computing experienced in the early 2010s.

The competitive implications are profound. While rivals like AMD and Intel have made credible efforts to challenge Nvidia in AI acceleration, the combination of hardware performance, software ecosystem maturity (CUDA), and now full-system integration creates barriers that competitors struggle to overcome. Each successful architecture generation extends Nvidia's lead time, making catch-up increasingly difficult.

The AI Infrastructure Mega-Trend: Why Demand Keeps Growing

Understanding Nvidia's investment thesis requires appreciating the unprecedented scale of AI infrastructure build-out currently underway. According to Bridgewater analysis, major technology companies including Alphabet, Amazon, Meta, and Microsoft are projected to invest a combined $650 billion in AI-related capital expenditures during 2026—a 58% increase from the $410 billion spent in 2025.

This spending surge reflects a fundamental strategic shift among cloud providers and enterprise customers. AI capability is increasingly viewed as existential to competitiveness across virtually every industry. Companies that fail to deploy advanced AI risk being displaced by more efficient competitors, creating a classic prisoner's dilemma where rational actors over-invest to avoid falling behind.

Data center capacity constraints illustrate the demand intensity. JLL Research anticipates roughly 100 gigawatts of new data center capacity coming online between 2026 and 2030, representing $1.2 trillion in real estate asset value creation. Tenants will likely spend additional hundreds of billions on computing equipment, with AI-specific deployments accounting for the majority of this investment.

The construction pace and its energy implications are staggering. Data center construction spending currently averages $8.6 billion monthly in the United States alone, with 20 major projects breaking ground in January 2026. The White House recently convened tech giants to address power cost concerns, signaling recognition that electricity availability may become the binding constraint on AI infrastructure expansion.

Nvidia's positioning within this ecosystem is uniquely advantageous. As the dominant supplier of AI training and inference accelerators, the company captures value regardless of which AI models succeed or which cloud providers win market share. This "picks and shovels" dynamic insulates Nvidia from the winner-take-all risks facing individual AI application companies while exposing it to the sector's overall growth.

Furthermore, Nvidia has begun expanding beyond hardware into software platforms and AI services. The reported development of "NemoClaw"—an open-source AI agent platform—suggests strategic ambition to capture value at higher stack levels. Success in software would improve gross margins and create additional switching costs that protect hardware market share.

Competitive Landscape: Can Anyone Catch Nvidia?

Despite Nvidia's dominant position, competitive threats deserve serious consideration. AMD has made the most credible challenge, with its MI300 series accelerators gaining traction among hyperscalers seeking supply diversification. Microsoft and Meta have publicly committed to deploying significant AMD silicon alongside Nvidia hardware, providing the volume necessary for AMD to improve its software ecosystem.

However, the gap remains substantial. Nvidia's CUDA software platform represents nearly two decades of accumulated developer tools, libraries, and optimizations that competitors struggle to replicate. While AMD's ROCm platform has improved dramatically, most AI research and development still occurs on Nvidia hardware, creating a self-reinforcing cycle where the best talent and latest techniques emerge CUDA-first.

Intel's Gaudi accelerators have secured some enterprise wins, particularly among customers with existing Intel relationships. However, Gaudi has failed to gain meaningful traction among leading AI labs and hyperscalers, suggesting architectural limitations for the most demanding workloads.

Custom silicon represents a more serious long-term threat. Google TPUs, Amazon Trainium, and Microsoft's Maia accelerator (developed under the codename Athena) demonstrate that major customers have the resources and motivation to design alternatives. These captive solutions can optimize for specific workloads while avoiding Nvidia's premium pricing. However, custom chips require massive upfront investment and limit customers to their own infrastructure, tradeoffs that only the largest operators can justify.

The emerging competitive dynamic may resemble the CPU market of the 2000s, where Intel maintained dominant market share while AMD captured 15-25% of the market during competitive product cycles. Nvidia likely faces gradual share erosion but retains structural advantages that should preserve premium pricing and superior margins for years.

Wall Street Sentiment: Analyst Views and Price Targets

Professional analysts have maintained enthusiastic coverage of Nvidia despite recent stock weakness, with the consensus view treating the pullback as a buying opportunity rather than a warning signal. The current analyst distribution shows 38 Strong Buy ratings, 3 Hold recommendations, and just 2 Sell ratings, which translates to roughly 88% positive coverage (38 of 43).
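The share of positive coverage follows directly from the rating counts. The sketch below, with a hypothetical helper name, computes each rating's share of the total using only the counts cited in this section.

```python
def rating_shares(counts: dict) -> dict:
    """Return each rating's share of total coverage, in percent."""
    total = sum(counts.values())
    return {label: n / total * 100.0 for label, n in counts.items()}


# Rating counts as cited in the article.
ratings = {"Strong Buy": 38, "Hold": 3, "Sell": 2}

shares = rating_shares(ratings)
for label, pct in shares.items():
    print(f"{label}: {pct:.1f}%")
```

On these counts, Strong Buy ratings make up about 88.4% of the 43 ratings, with Hold at about 7.0% and Sell at about 4.7%.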

Price target dispersion reveals the range of potential outcomes analysts envision. The median target of $265 implies 43% upside from current levels, while more aggressive forecasts like Tigress Financial's $360 target suggest the stock could nearly double. Even conservative estimates generally assume recovery toward $245, representing substantial returns for patient investors.
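The upside figures attached to each target are simple ratios against the current share price. As a rough check, the following sketch assumes a current price of about $185 (the level cited earlier in the article) and uses hypothetical labels for the three targets mentioned in this section.

```python
def implied_upside_pct(target: float, price: float) -> float:
    """Percentage gain implied if the stock moves from price to target."""
    return (target / price - 1.0) * 100.0


current_price = 185.0  # approximate level cited in the article

# Price targets mentioned in this section; the labels are illustrative.
targets = {
    "conservative": 245.0,
    "median": 265.0,
    "Tigress Financial": 360.0,
}

for label, target in targets.items():
    upside = implied_upside_pct(target, current_price)
    print(f"{label}: ${target:.0f} -> {upside:.1f}% upside")
```

At $185, the conservative target implies roughly 32% upside, the median about 43%, and the Tigress Financial target about 95%, which is consistent with the "nearly double" characterization.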

Valuation metrics require context given Nvidia's growth trajectory. The stock trades at elevated multiples compared to traditional semiconductor companies, but this premium reflects genuine differentiation in growth prospects and profitability. With net margins exceeding 50% in recent quarters, Nvidia generates economic returns that justify premium valuations unavailable to commodity chipmakers.

The key valuation question centers on sustainability. If AI infrastructure spending proves cyclical rather than secular—experiencing boom-bust patterns similar to historical semiconductor cycles—current multiples will compress painfully. However, if AI adoption follows the multi-decade trajectory of previous platform shifts like cloud computing or mobile internet, today's valuations may prove conservative.

Analysts broadly expect Nvidia to maintain pricing power despite competitive pressure. The combination of technological leadership, software ecosystem lock-in, and systems-level integration creates customer relationships that competitors struggle to disrupt. Even AMD's most optimistic projections assume capturing share primarily from Intel and custom silicon rather than displacing Nvidia in core AI workloads.

Risks and Challenges: What Could Go Wrong

No investment analysis is complete without honest assessment of downside scenarios. Nvidia faces several identifiable risks that could derail the bull case despite its current strengths.

Geopolitical escalation represents the most immediate concern. Further U.S. restrictions on China exports would eliminate a meaningful revenue stream, while potential Chinese retaliation against American technology companies could disrupt supply chains or create market access issues. The H20 suspension illustrates how quickly regulatory environments can shift, forcing product strategy pivots on short notice.

Technology transitions always carry execution risk. If the Blackwell ramp experiences additional delays, or if Vera Rubin fails to deliver promised efficiency gains, customers may accelerate evaluation of alternatives. Manufacturing complexity increases with each generation, raising the probability of yield issues or design flaws that constrain supply.

Competition, while currently manageable, could intensify faster than expected. AMD's improved execution, combined with substantial custom silicon investment from major customers, might erode Nvidia's pricing power more quickly than analysts project. Software ecosystem advantages persist but are not insurmountable—Microsoft and Google have the resources to build credible alternatives if sufficiently motivated.

Macroeconomic factors also warrant attention. The Federal Reserve's interest rate decisions influence valuation multiples for growth stocks, while broader economic weakness could pressure enterprise IT spending. AI infrastructure has proven relatively resilient to economic concerns thus far, but a severe downturn would eventually impact capital expenditure budgets.

Finally, there exists the possibility that AI demand proves less durable than current projections assume. If enterprise AI adoption stalls, or if model training requirements plateau, the infrastructure build-out could slow dramatically. While this scenario appears unlikely given current momentum, investors should size positions recognizing that the AI thesis, while compelling, remains unproven at current scale.

Conclusion: Is Nvidia a Buy Ahead of GTC 2026?

Nvidia enters its GTC 2026 conference with substantial momentum despite recent stock weakness. The combination of approaching product cycles, expanding AI infrastructure investment, and durable competitive advantages creates a compelling risk-reward profile for investors with appropriate time horizons.

The "world-surprising" chip teased by Jensen Huang could prove genuinely transformative if it delivers meaningful advances in agentic AI capability or optical computing integration. Even absent a dramatic reveal, the Vera Rubin architecture ramp and continued Blackwell adoption should drive revenue growth that exceeds current consensus estimates.

Wall Street's enthusiasm appears justified by fundamental business quality rather than speculative excess. Nvidia generates genuine economic profits at margins unmatched in the semiconductor industry, reinvests aggressively in R&D to extend technological leadership, and benefits from secular trends that show no signs of abating.

For investors seeking exposure to AI infrastructure growth, Nvidia remains the highest-conviction option despite its scale. The stock's pullback from all-time highs offers a more attractive entry point than has been available for months, with GTC potentially serving as the catalyst that reinvigorates upward momentum.

However, position sizing should reflect the genuine risks identified. Geopolitical tensions, competitive pressure, and technology transition challenges could all pressure the stock in coming quarters. Nvidia deserves a meaningful portfolio allocation for believers in the AI thesis, but concentration risk should be managed appropriately.
